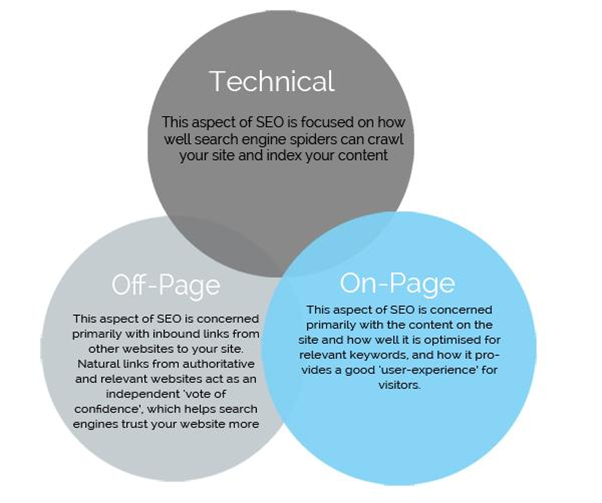

Unfortunately, most webmasters and SEO experts either ignore the technical side or leave it to plugins. On-page and off-page SEO are crucial, but without technical SEO, you cannot go very far.

There are several technical aspects of your website that need special attention. Below I’ll talk about 6 of the most crucial and least talked-about ones so you understand what you need to know to rank better.

- Advanced Data Research

- Structured Data and Schema Markup for SEO

- Ensure Website Index Status

- Crawl Path Optimization

- XML Sitemaps/HTML Sitemap

- Secure Website

- Secure Certificate

- Check Metadata

- Title Tag

- Image Optimization

- Advanced Search Operators

- Optimize Site Speed

- Monitor Google Search Console Stats

1. Advanced Data Research

In this section, we’re going to look into the importance of structured data and schema markup for SEO.

So, let’s quickly get to it.

Structured Data and Schema Markup for SEO

You might have heard that using schema markup, or other kinds of structured data, can help search engines better understand your site’s content and improve search visibility through featured snippets, Knowledge Graph results, and rich snippets.

Put succinctly, structured data is an excellent way to send more search engine-friendly signals, which can impact search rankings indirectly.

John Mueller of Google recently confirmed that Google might eventually include structured data markup among its ranking factors. So it makes sense to implement schema markup on your site now, as Google is taking it more seriously. For now, though, Google is still surfacing structured data organically.

There are basically three parts to a website:

- Markup

- Text

- Structured data

The text is the content itself, markup tells the browser how that text should look, and structured data tells search engine spiders such as Googlebot what the data is all about.

Furthermore, structured data doesn’t just enhance how your website looks in search results, it can also help search engines categorize your website.

Most times, the advantages of structured data aren’t immediately obvious, but as your understanding of SEO improves, it becomes more apparent that it should be an essential part of any SEO campaign.

Below is an example of a local business with markup on its event schedule page. The SERP entry looks like this:

The schema markup here signals Google’s spider to show a schedule of upcoming hotel events. That is exceptionally helpful for the user.
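To make the idea concrete, here is a minimal sketch of how event markup like this could be generated as JSON-LD, one of the common formats for schema.org data. The event name, date, and hotel details are invented for illustration, and `to_json_ld_script` is a hypothetical helper, not part of any plugin or library.

```python
import json

# Hypothetical event details, invented purely for illustration.
event = {
    "@context": "https://schema.org",
    "@type": "Event",
    "name": "Live Jazz Night",
    "startDate": "2030-06-14T19:00",
    "location": {
        "@type": "Place",
        "name": "Example Hotel",
        "address": "123 Example Street, Springfield",
    },
}


def to_json_ld_script(data: dict) -> str:
    """Wrap a schema.org dictionary in a JSON-LD script tag for the page's HTML."""
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


snippet = to_json_ld_script(event)
print(snippet)
```

The printed snippet would go into the HTML of the event page. Whether a rich result actually appears is up to the search engine.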

Here are some often used types of structured data:

- a blog post

- an article

- an event

- a website

- a recipe or a book

- a review

- a social media post

- website breadcrumbs

- a song, playlist, or album

And so on…

In all, there are numerous types of schema (including people, places, and things) ranging from broad topics to the more focused and specific ones.

Some of the really specific ones like “sports organization” or “airline” don’t really serve a direct purpose in SEO — but Google is regularly introducing new features, so it’s always advisable to mark up as much data as possible.

2. Ensure Website Index Status

Crawl Path Optimization

Crawl optimization refers to optimizing and prioritizing the crawlability of your website to improve SEO. Google assigns a specific crawl budget to every website.

Crawl budget refers to the maximum number of pages that Googlebot will crawl on any site.

In the pre-Caffeine era, Google determined a crawl budget for every website. The Caffeine update changed everything.

After the Caffeine update, crawling became an incremental and continuous process. Google now uses a system called Percolator, and here is what it does:

“We have built Percolator, a system for incrementally processing updates to a large data set, and deployed it to create the Google web search index. By replacing a batch-based indexing system with an indexing system based on incremental processing using Percolator, we process the same number of documents per day, while reducing the average age of documents in Google search results by 50%.”

It means Google now ensures that the most important pages on your website stay the freshest in the index, so they’re crawled more often than less important pages.

So your website, like every website out there, has a specific crawl budget that you have to optimize so that all your pages are crawled and indexed, not just a few important ones.

For instance, if your crawl budget is 50 pages a day, Googlebot will crawl up to 50 pages on your website every day. Here is what you can do to optimize the crawling process.

- Make the best use of the robots.txt file. Not sure how to make it work? Here is a complete guide.

- Keep your entire website and all the pages crawlable.

- Keep website structure simple and complete (as discussed above).

- Avoid redirects.

- Interlink so that Googlebot can find new and inner pages on your website easily.

- Add new fresh content regularly.

- Create a sitemap.xml.
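As a quick way to check that your robots.txt isn’t blocking pages you want crawled, here is a small sketch using Python’s standard `urllib.robotparser` module. The robots.txt rules and the example.com URLs are made up for illustration, and `is_crawlable` is a hypothetical helper name.

```python
from urllib.robotparser import RobotFileParser

# A made-up robots.txt that blocks a single private directory.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def is_crawlable(robots_txt: str, url: str, agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt rules allow `agent` to fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


for url in ("https://example.com/blog/new-post", "https://example.com/private/draft"):
    status = "crawlable" if is_crawlable(ROBOTS_TXT, url) else "blocked"
    print(f"{url}: {status}")
```

Keep in mind that this only mirrors how Python interprets the rules. Googlebot’s own matching can differ in edge cases, so treat it as a sanity check rather than the final word.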

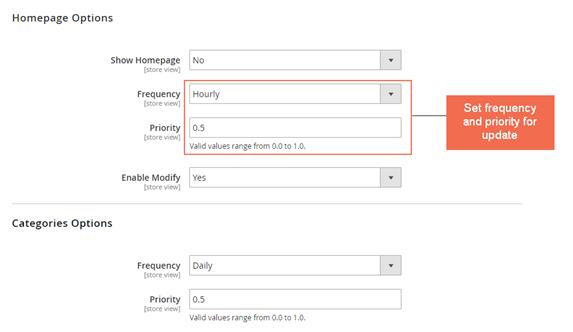


XML Sitemaps/HTML Sitemap

When search engine spiders crawl a website, it means they are browsing around looking for fresh content and links to index (i.e., to add to their database).

If you do nothing other than publish fresh content and wait for search engine crawlers to come and index it, there’s nothing wrong with that. In fact, there are times when you can let bots find your new pages naturally, assuming you’re not in a hurry.

However, you can improve crawlability in ways that help search engine spiders find your new pages easily.

Here’s exactly how to improve the index status of your website:

i). Update your sitemap frequently: Make sure you update old sitemaps so that search engines can find the changes you have made and index your new pages. You could set the update frequency to hourly, daily, weekly, or monthly, depending on how often you publish new content on your website.

ii). Share newly published content on social media sites: Once a new post is live, tweet, share on Facebook, pin the image on Pinterest, and like it. Create a form of social engagement around the post. Given that search engine spiders visit social media sites every so often, it means that your newly shared post will be discovered easily.

iii). Ping your blog feed to Pingomatic: In the past, ping websites were very useful. But after the Google Panda and Penguin updates, pinging services were no longer prominent.

But you can still use them to quickly notify search engines that your blog feed has been updated with a new post link. I use Pingomatic.com because it doesn’t duplicate your feed’s data (which would manipulate index status). Instead, it signals the search directories to update their database with your page.

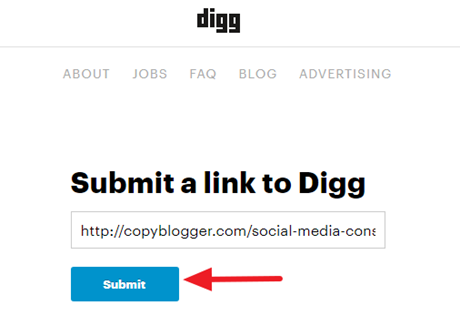

iv). Bookmark your new page on Reddit, Digg, and other Web 2.0 sites: Create accounts on both Digg and Reddit so that whenever you publish a new post, you can bookmark it quickly.

Though Digg isn’t as popular as it used to be, it’s still a delight for search bots. I personally found that my new posts get indexed a few hours after I bookmark them.

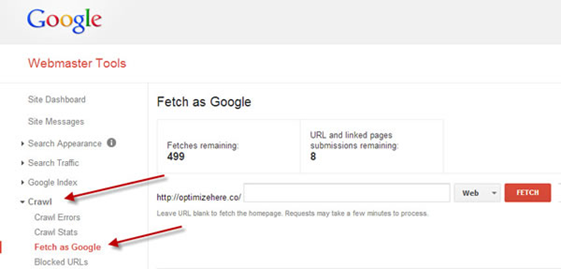

v). Use the “Fetch as Google” option in Google Search Console (since replaced by the URL Inspection tool) to submit your new pages: This is important because it tells Google to fetch the exact page you want indexed quickly.

Crawl path optimization is mainly concerned with the sitemap and the robots.txt file, which tells search engine robots which pages they may or may not crawl.
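If you’d rather see what a sitemap contains than leave it entirely to a plugin, here is a rough sketch that builds a small sitemap.xml with Python’s standard library. The URLs, dates, and update frequencies are placeholders, and `build_sitemap` is a hypothetical helper. The `loc`, `lastmod`, and `changefreq` tags come from the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages) -> str:
    """Build sitemap XML from (url, last_modified, change_frequency) tuples."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod, changefreq in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
        ET.SubElement(url, "changefreq").text = changefreq
    return ET.tostring(urlset, encoding="unicode")


# Placeholder pages, invented for illustration.
pages = [
    ("https://example.com/", "2030-01-15", "daily"),
    ("https://example.com/blog/technical-seo", "2030-01-10", "weekly"),
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

Regenerating this file whenever you publish keeps the "update your sitemap frequently" step from depending on memory.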

3. Secure Website

A successful website is functional, easy to use, valuable, and above all secure for users.

In order to secure your website, you need a secure certificate, also called an SSL certificate.

SSL certificates are small data files that digitally bind a cryptographic key to an organization’s details. Once installed on a web server, they activate the padlock and the https protocol, which, in turn, enables secure connections between the web server and the browser.

Typically, you use secure certificates on your website if you accept credit cards or handle any other financial transactions, data transfers, or logins. Today the technology is also growing in popularity for securing the browsing of social media sites.

As marketers, we use SSL certificates to bind:

- A domain name, server name or hostname.

- A company identity (i.e., company name) and location.

You don’t have to set up a secure certificate on your website manually. You can use the services of your web host (the majority of them will do it for you) to activate the padlock and show your web visitors that your website is secure and safe for them.

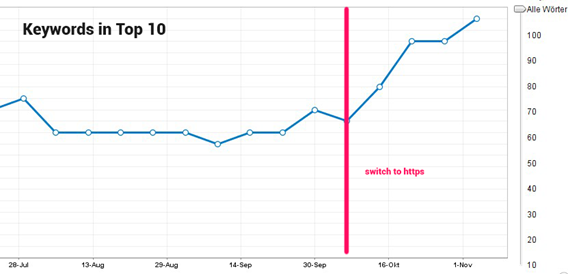

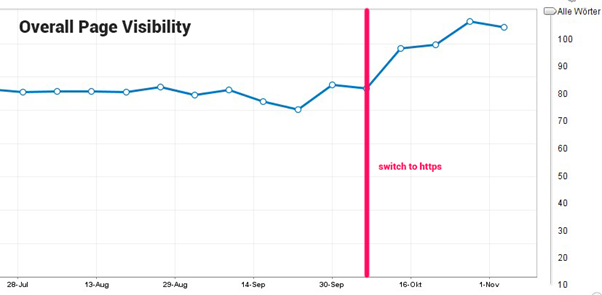

For SEO purposes, Google has announced that HTTPS is now a ranking factor.

When this announcement was made in 2014, website owners got confused. They wanted to know how HTTPS affects search rankings. Does having an encrypted website help you gain more organic visibility, they asked.

As little as 1.9% of the top 1 million websites redirect users to a default HTTPS/SSL version. Look at the Quantcast top 10,000 websites and you will notice that a measly 4.2% have made the shift to this secure version. Overall, less than 0.1% of the websites on the internet are secure.

Switching to a secured website is important.

One of the major benefits of Hypertext Transfer Protocol Secure (HTTPS) is that it provides a secure connection for website users and helps them protect their personal data.

You’re helping users feel at home, especially when they share precious information like credit card details and passwords. HTTPS adds an extra layer of protection for the user.

If you want to sustain your ranking this year, you need to seriously consider switching from unsecured HTTP, where data can be intercepted, to HTTPS. Essentially, Google has stated that if all other factors are equal, HTTPS can impact your search engine results. As an example, Cloudtec recorded almost double the number of top 10 search engine rankings after switching to HTTPS.

The company also improved its page visibility in the search engines.

Despite the enormous benefits of installing and using an SSL certificate on your website, there are limitations or downsides too.

I thought you should know about them.

a). SSL certificates can be expensive: There are several solutions that give you an SSL certificate for free, which can be helpful. But as you know, you get what you pay for.

If you choose to invest in premium solutions, bear in mind that the costs can add up to $1,499/year if you choose an SSL certificate from a provider like Symantec. Moreover, if you have a website that receives a lot of traffic and has many pages, then the costs associated with encrypting the transferred data can be quite significant.

Such high costs for an SSL certificate may be justifiable for large companies with huge budgets, but small business owners with limited budgets often can’t afford them. Even if they can, it may not make business sense.

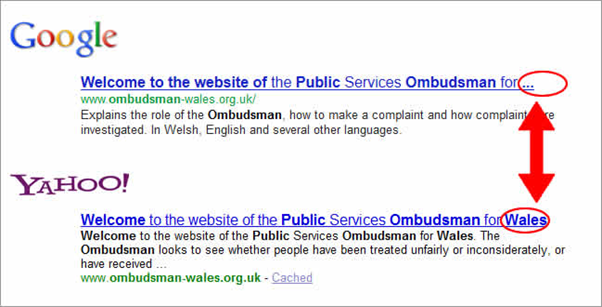

b). Duplicate content issues: If you activate the secure padlock the wrong way, you might end up with duplicate content issues, where both the HTTP and HTTPS versions of your web page get indexed.

Consequently, different versions of the same web page would show up in the search engine results pages (SERPs).

In turn, this can be seen as a manipulative method of gaming search rankings. It will also confuse your visitors and lead to a negative user experience.
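One common way to avoid this duplication is to send every HTTP address to its HTTPS twin with a permanent (301) redirect. Most hosts and plugins handle this for you, but the logic is simple enough to sketch. The function names below are hypothetical, and the URLs are placeholders.

```python
from urllib.parse import urlsplit, urlunsplit


def https_version(url: str) -> str:
    """Return the same URL with the scheme switched from http to https."""
    parts = urlsplit(url)
    if parts.scheme == "http":
        parts = parts._replace(scheme="https")
    return urlunsplit(parts)


def redirect_for(url: str):
    """Return (301, target) if the URL should be redirected, otherwise None."""
    target = https_version(url)
    return (301, target) if target != url else None


print(redirect_for("http://example.com/blog/post?page=2"))
print(redirect_for("https://example.com/blog/post?page=2"))
```

The point is that exactly one version of each page stays reachable, so only that version can be indexed.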

Now that we’ve had a glance at the benefits and challenges associated with HTTPS, let’s look at the data.

I decided to point out the negatives of switching from HTTP to HTTPS to help you decide exactly what you want. In the end, you should have a secure website but make sure it’s set up the proper way.

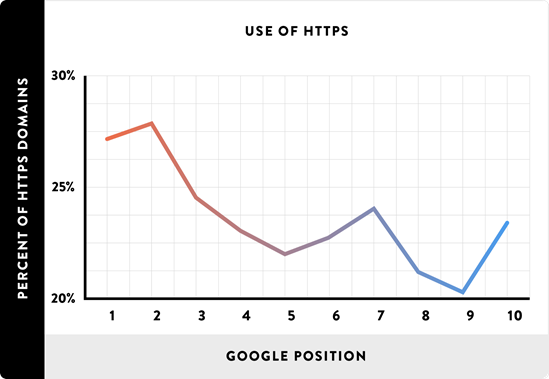

So, does HTTPS help with rankings? Is there research to back up the claims made online? Well, there is.

Brian Dean, founder of Backlinko, teamed up with SimilarWeb, SEMrush, Ahrefs, and MarketMuse to analyze 1 million Google search results.

The results revealed that HTTPS is moderately correlated with higher search rankings on Google’s first page listings.

However, based on his analysis, Brian gave two takeaways to help you maximize results with HTTPS:

- There’s no need to switch to HTTPS solely for SEO purposes. Why? Because it requires a rigorous process and there isn’t a strong correlation between the two.

- It’s always the best approach to have HTTPS in place from day 1 if you’re just starting a new website or blog. It’s a lot easier than implementing it on an existing website.

4. Check Metadata

Meta tags only exist in the head of the page, in HTML form. They don’t appear anywhere else. Meta tags are snippets of text that describe what the page’s content is about and help the user understand the information on the page from search engine results.

The “meta” stands for “metadata”, which is the data these tags carry in the page’s head section.

Meta tags can help your SEO if you follow the right approach, though not all tags impact SEO, and not all of the time.

Let’s consider the most important meta tags that will help your search engine rankings below:

a). Meta Title or Title Tag: The title tag is the main title of a page, represented by the <title> element.

This is the most important meta tag. It’s visible to the average user, influences click-through rate on the search engines, and helps search engine crawlers understand the page better. If you’re a WordPress user, you can use the Yoast or All in One SEO plugins to add an optimized title tag to your page.

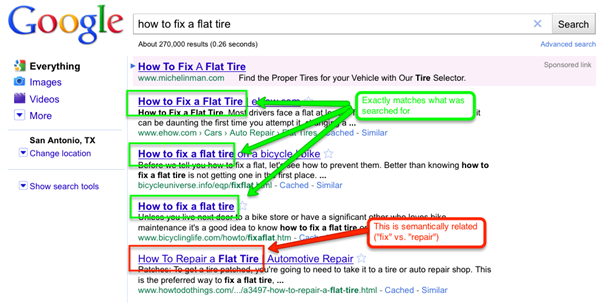

Here’s what the title tag looks like in the search engine results:

When writing your title tag, make sure it contains 50 characters or fewer. That way, search engines will show all the words in the title instead of truncating it with dots (…). Here it is in action:

The ideal length of your <title> will depend on the device most people use to view your website and its pages. But a rule of thumb is to keep it as short and descriptive as possible.

For example, if your website receives lots of mobile visitors, then go for a shorter title. It’s as simple as that. If you have more desktop users visiting your website, then increase the length a bit. Or stick to short titles because they will appeal to users regardless of their device.
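If you want to audit title lengths without opening every page by hand, a short script can do it. This is a minimal sketch using Python’s built-in HTML parser. The 50-character limit is the guideline from this section, and the class and function names are my own.

```python
from html.parser import HTMLParser

MAX_TITLE_LENGTH = 50  # guideline used in this article, not a hard limit


class TitleExtractor(HTMLParser):
    """Collect the text found inside the page's <title> element."""

    def __init__(self):
        super().__init__()
        self._in_title = False
        self.title = ""

    def handle_starttag(self, tag, attrs):
        if tag == "title":
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def check_title(html: str):
    """Return (title, fits) where fits is True if the title is short enough."""
    parser = TitleExtractor()
    parser.feed(html)
    title = parser.title.strip()
    return title, len(title) <= MAX_TITLE_LENGTH


page = "<html><head><title>Technical SEO Checklist</title></head><body></body></html>"
title, fits = check_title(page)
print(f"{title!r}: {len(title)} characters, within limit: {fits}")
```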

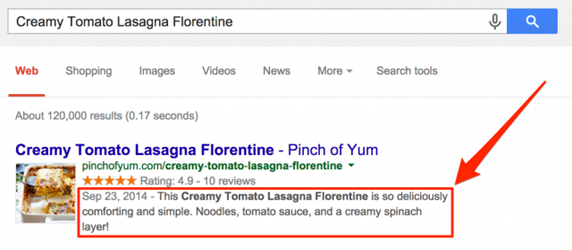

b). Meta description: The meta description is primarily the short description or summary of a particular page.

A few years ago, meta descriptions helped search engines know what the page was about. Today, Google reads the entire page, and if you don’t specify a meta description, Google will use the introductory part of your content to describe what the page is about.

Thus, the meta description is a suggestion for the text displayed in Google’s search result pages. Here’s an example:

c). Image Optimization: “A picture is worth a thousand words”. Images are important in your content. If you’re a blogger, a website owner, a content creator, or a digital marketer, you will find this section useful.

When you add the right images to your page, it will help readers understand your content better.

If you can illustrate a data point in a chart, graph, or data flow diagram, it will definitely increase the perceived value of your blog posts or social media posts.

Aside from the typical image optimization tips which you’ve probably come across, here are other ways to optimize your images:

i). Prepare image for speed: Page loading times play an important role in UX and SEO. If your website loads up quickly, users will enjoy visiting it, and search engine bots will enjoy crawling and indexing it.

When it comes to image dimensions, you have to be careful, as they can impact loading times.

An example: using a 1850×1850 pixel image and displaying it at 180×150 pixels. The entire image still has to be loaded even though only a fraction of it is shown, which poses a problem.
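To get a feel for how wasteful that is, you can compare pixel counts. This is only a back-of-the-envelope sketch (file size also depends on format and compression), and `pixel_overhead` is a hypothetical helper.

```python
def pixel_overhead(source, displayed) -> float:
    """Return how many times more pixels the source has than the displayed size."""
    source_width, source_height = source
    shown_width, shown_height = displayed
    return (source_width * source_height) / (shown_width * shown_height)


ratio = pixel_overhead((1850, 1850), (180, 150))
print(f"The browser downloads about {ratio:.0f} times more pixels than it shows.")
```

For the example above, the ratio works out to roughly 127, so almost all of the downloaded image data is thrown away.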

The best approach is to scale the image to the size you want to display to users. WordPress already generates the image in multiple sizes after you upload it. Sadly, that only takes care of the image dimensions.

The file size is still that of the original and not optimized. Search the WordPress plugin repository for image optimization plugins. There are more than a handful that you can use to optimize your images for speed.

In addition to using responsive images that display well on any device screen, here’s what to do next…

ii). Reduce file size: For your images to help improve your search visibility, make sure they are saved at the smallest file size possible. There are many tools of the trade.

Better yet, you could simply export the image and gauge the quality that’s acceptable. I recommend you use 100% quality images as standard.

To reduce the file size of these images, you can simply remove the EXIF data. We recommend using tools like ImageOptim or websites like JPEGMini, Kraken.io, ImageResize.org, or PunyPNG.

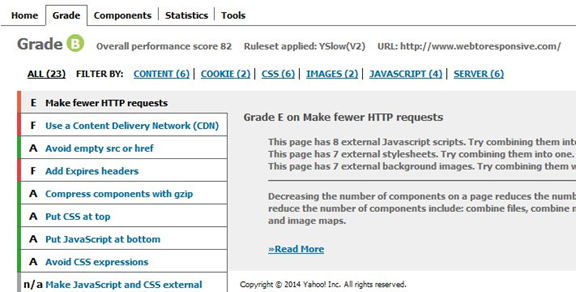

After reducing image file size, you should use tools like YSlow to check if your image optimization worked.

iii). Alt Text and Title Text: The alt text (or alt tags) is added to an image to describe the image when it’s not displayed properly to the visitor. Wikipedia has a better illustration:

“In situations where the image is not available to the reader, perhaps because they have turned off images in their web browser or are using a screen reader due to a visual impairment, the alternative text ensures that no information or functionality is lost.”

Your images need alt texts. Include your target keyword in the alt text to help describe the image better for both search engines and users. But don’t get involved in keyword stuffing.

The Title Text for images performs a similar role as the alt text. More and more people simply leave these out. If you don’t want to waste time, you could use the same text copy to optimize both sections.

The purpose of the title text is to provide non-essential information about the image, such as what the image stands for, or the context.
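Finding images with no alt text is easy to automate. Here is a minimal sketch with Python’s built-in HTML parser. The sample HTML is invented, and the class name is my own. Note that an empty alt is sometimes used on purpose for purely decorative images, so treat the output as a list to review rather than a list of errors.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Record the src of every <img> whose alt attribute is missing or empty."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = attributes.get("alt")
        if alt is None or not alt.strip():
            self.missing.append(attributes.get("src") or "(no src)")


# Invented sample markup for illustration.
sample_html = """
<img src="chart.png" alt="Bar chart of page load times">
<img src="logo.png">
<img src="spacer.gif" alt="">
"""

finder = MissingAltFinder()
finder.feed(sample_html)
print("Images to review:", finder.missing)
```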

d). URL Optimization

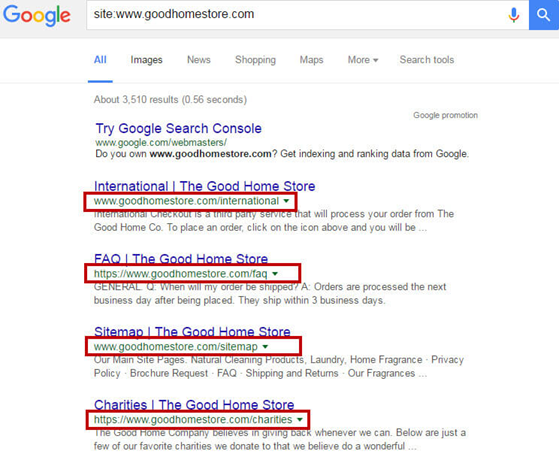

Advanced Search Operators: There are several advanced search operators that give you access to a wealth of knowledge that can help you with SEO. These operators help SEO experts find very specific information that cannot be obtained with general queries.

Here is a list of some of the advanced search operators for SEO with their details.

Site: You can use this operator to explore any website, its indexed pages, and more.

Use the following search query:

site:domainname.com

The uses of this operator for SEO are potentially unlimited. Here are some of the main ones:

- Use it to find indexed pages in Google.

- Use site:domainname.com keyword to find a list of posts and pages on the specific keyword on the domain.

- Find specific writers or contributors on a site by adding your desired keyword after the operator.

- Search images on a site or maybe infographics or videos.

Cache: It helps you find the most recent cached copy of a page, which shows you when it was last crawled.

Here is the syntax:

cache:domainname.com

The best way to use this operator is to add the word cache: before the URL of the page in the address bar. This will show you the cached version of the page along with the date when it was last crawled.

Easy, right?

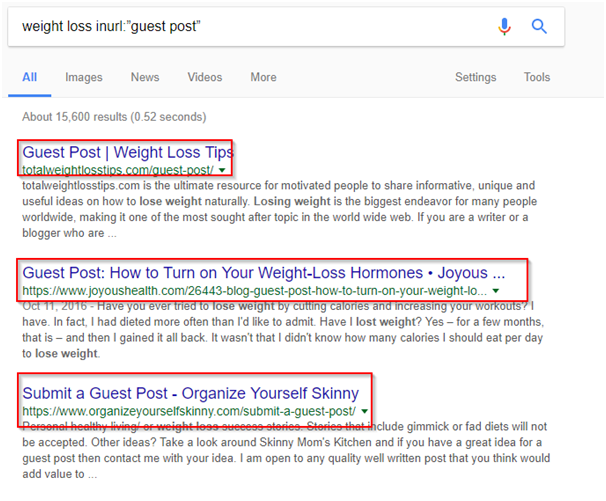

inurl: It helps you find websites that have a specific term or phrase in their URL. It can be used to find pretty much anything on the internet if you know how to use it.

For instance, suppose you’re looking for sites that accept guest posts. There is a fair chance that the words ‘guest’ and/or ‘post’ appear in the URLs of such websites and web pages.

Makes sense, right?

You can use inurl to find exactly these pages. Run the following query:

inurl:"guest post"

Refine the search query to find guest posting opportunities in your niche.

weight loss inurl:"guest post"

Works well. You can use guest post without quotation marks too.

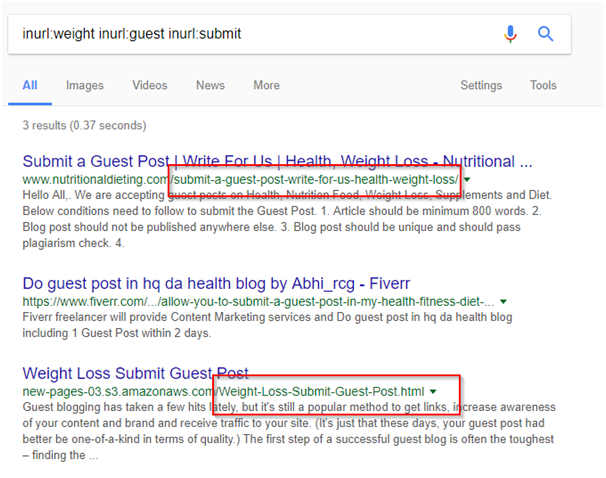

For best results, you can use multiple inurl operators in a single search query to refine your search.

inurl:weight inurl:guest inurl:submit

There are several ways you can use inurl. For instance, to find content in a specific niche, in a specific country, by a specific author, and more.

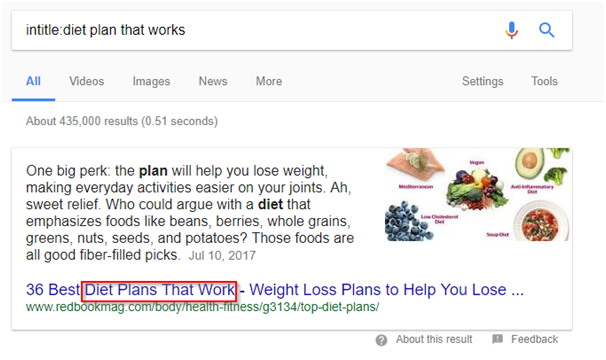

intitle: Just like inurl, intitle helps you find web pages having specific words and phrases in the title. Here is how to use it:

intitle:diet plan that works

To better refine your search, you should use quotation marks.
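If you run these searches often, it can help to assemble them programmatically. The sketch below simply glues the operators from this section together into a query string. `build_query` and `quote_if_needed` are hypothetical helpers of my own, not part of any Google API.

```python
def quote_if_needed(term: str) -> str:
    """Wrap multi-word phrases in quotation marks so they are matched exactly."""
    return f'"{term}"' if " " in term else term


def build_query(keywords: str = "", site: str = None, inurl=(), intitle: str = None) -> str:
    """Combine plain keywords with site:, inurl: and intitle: operators."""
    parts = []
    if keywords:
        parts.append(keywords)
    if site:
        parts.append(f"site:{site}")
    for term in inurl:
        parts.append(f"inurl:{quote_if_needed(term)}")
    if intitle:
        parts.append(f"intitle:{quote_if_needed(intitle)}")
    return " ".join(parts)


print(build_query("weight loss", inurl=["guest post"]))
print(build_query(inurl=["weight", "guest", "submit"]))
print(build_query(intitle="diet plan that works"))
```

The three calls reproduce the example queries from this section, with the intitle phrase quoted.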

Here is a list of more operators to help you get started.

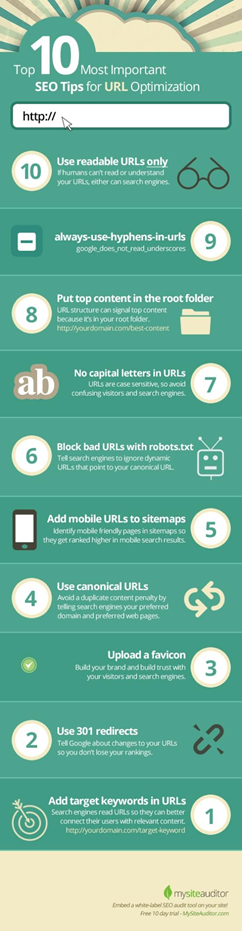

URL Optimization: Optimizing URLs is part of your website’s structure. It helps both Googlebot and your customers get a better understanding of what a page is about and where it is located.

Google provides three quick tips to improve URL structure:

- Use words in URLs to make them readable.

- Create a simple directory structure to organize content and pages on your website.

- Make sure that there is only one version of a URL to reach a specific page on your website.
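The first tip, readable words in URLs, is what most CMS platforms call a "slug". Here is a minimal sketch of how a post title could be turned into one. It is an illustration only (it drops non-English letters, for example), not how any particular CMS does it.

```python
import re


def slugify(title: str) -> str:
    """Turn a post title into a lowercase, hyphen-separated URL slug."""
    slug = title.lower()
    # Replace every run of characters that are not letters or digits with one hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", slug)
    return slug.strip("-")


print(slugify("10 Technical SEO Tips (That Work!)"))
```

For the sample title this gives `10-technical-seo-tips-that-work`, which is far easier to read than a URL made of IDs and query parameters.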

This gives you a decent starting point for URL optimization, but there is more to it. The following infographic makes URL optimization not just simple but visually pleasing.

5. Optimize Site Speed

The speed of a website matters. If your website is slow, you’re doing your business a disservice.

Did you know that a 1-second delay in page load time yields:

- 7% loss in conversions, according to Aberdeen Group?

- 11% fewer page views?

- 16% decrease in customer satisfaction?

Sure, it does. In the real world, speed kills. But when it comes to website conversions, speed keeps you alive.

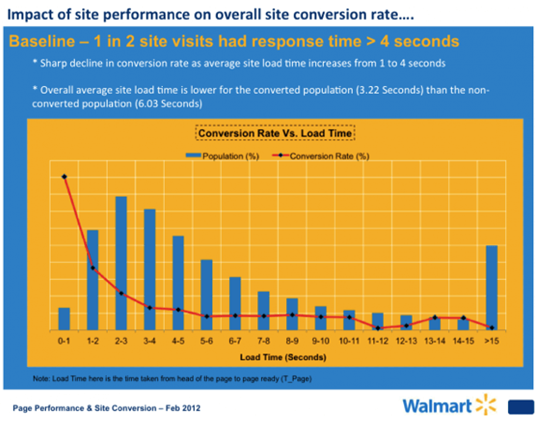

Amazon, the giant shopping site, increased its revenue by 1% for every 100 milliseconds of improvement to its site speed.

Walmart took it a step further and recorded a 2% increase in conversions for every 1 second of improvement.

As much as possible, keep improving your website’s load speed. Why? Because 47% of people expect a web page to load in two seconds or less.

With all of these statistics, it makes sense to increase your page speed.

Here are 3 proven ways to speed up your website loading times:

i). Minimize HTTPS Requests: Earlier, we discussed the importance of having a secure website. Another negative attribute of an SSL certificate is that it can slow down a page.

Data from Yahoo! Revealed that 80% of a web page’s load time is spent on downloads. Given there are different parts of the page that must be downloaded:

- Images

- Stylesheets

- Scripts

- Flash, etc.

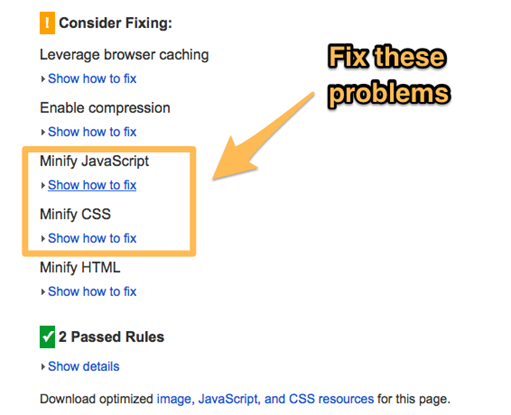

Unfortunately, each of these elements sends an HTTPS request which slows down page loading time. This equally means that the more elements a page has, the slower it will be — because there will be too many HTTPS requests going on at the same time. You can minify javascript and CSS to reduce these requests.

Having said that, you can also improve your page speed by simplifying your design. Here are proven ways to go about it:

- Streamline the number of elements a given page has.

- Use CSS to frame your pages instead of images.

- Combine multiple style sheets into one sheet.

- Use scripts but make sure they are at the bottom of the page.
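To see how many extra requests a page triggers, you can count the elements that pull in external files. The sketch below only looks at images, external scripts, and stylesheets in the raw HTML, so it will undercount anything loaded later by scripts or CSS. The sample page and class name are invented.

```python
from collections import Counter
from html.parser import HTMLParser


class RequestCounter(HTMLParser):
    """Count tags that make the browser fetch an additional file."""

    def __init__(self):
        super().__init__()
        self.counts = Counter()

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "img" and attributes.get("src"):
            self.counts["images"] += 1
        elif tag == "script" and attributes.get("src"):
            self.counts["scripts"] += 1
        elif tag == "link" and "stylesheet" in (attributes.get("rel") or "").lower().split():
            self.counts["stylesheets"] += 1


# Invented sample page for illustration.
sample_page = """
<html><head>
<link rel="stylesheet" href="main.css">
<link rel="stylesheet" href="theme.css">
<script src="app.js"></script>
</head><body>
<img src="hero.jpg" alt="Hero image">
<img src="logo.png" alt="Logo">
</body></html>
"""

counter = RequestCounter()
counter.feed(sample_page)
print(dict(counter.counts), "total:", sum(counter.counts.values()))
```

In this sample, merging the two stylesheets into one would remove a request without changing how the page looks.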

ii). Reduce server response time: You want to achieve a server response time of less than 200ms (milliseconds). Don’t worry, the tips in this guide will help you achieve it.

According to Google, you should use a web application monitoring solution and check for bottlenecks in performance frequently. That’s the best way to understand how the server works, and ultimately, how you can enhance response time.

iii). Use compression: If you have been publishing comprehensive content for a little over 6 months, then you have several large pages that are often 100kb or more. These bulky pages are responsible for slowing down downloads when a request is sent to the server.

The good news is, you can compress these pages to speed up loading time.

Compression reduces the bandwidth your pages use, thereby reducing HTTP response time. You can achieve this with a simple tool called Gzip.

Most popular web servers can compress your files in Gzip format before sending them for download. There are two ways a server can do this:

- Calling a third-party module, or

- Using built-in routines.

According to a Yahoo study, both options can reduce download time by about 70%.

Since 90% of today’s web traffic travels through browsers that support Gzip, you have to consider it an option for speeding up your website.
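You can get a feel for what Gzip does with a few lines of Python, which ships with a `gzip` module. The sample page below is an invented, highly repetitive string, so it compresses far better than a real page would. Treat the numbers it prints as an illustration, not a benchmark.

```python
import gzip

# An invented, very repetitive "page". Real pages compress less dramatically.
page = ("<p>Technical SEO helps search engines crawl and index your pages.</p>\n" * 500).encode("utf-8")

compressed = gzip.compress(page)
saving = 1 - len(compressed) / len(page)

print(f"Original: {len(page)} bytes")
print(f"Gzipped:  {len(compressed)} bytes ({saving:.0%} smaller)")

# The browser reverses the process and gets back exactly the same bytes.
restored = gzip.decompress(compressed)
```

On a live site you would not run this yourself. You would switch on compression in your web server or hosting panel and let it do the same thing on every response.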

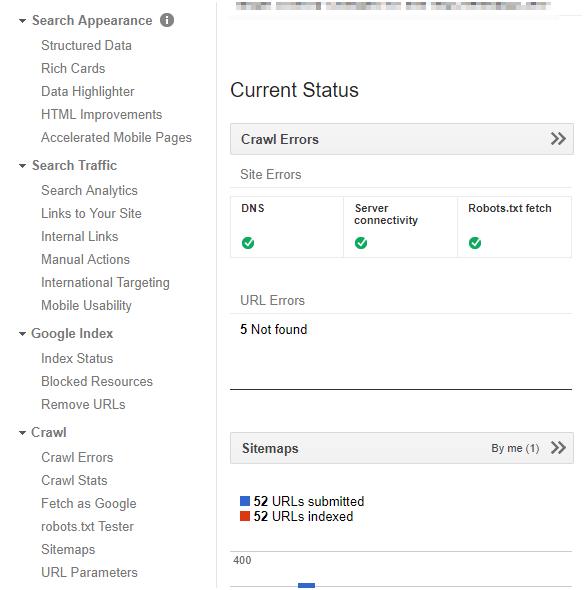

6. Monitor Google Search Console Stats

Are you registered with Google Search Console?

It is a free web-based tool for webmasters to help them monitor website performance in Google. Here are some of the main features:

- Search appearance information

- Search traffic

- Technical SEO status

- SEO related updates

- Crawl and indexing data

- Website performance in search engine

- Optimization tips and suggestions

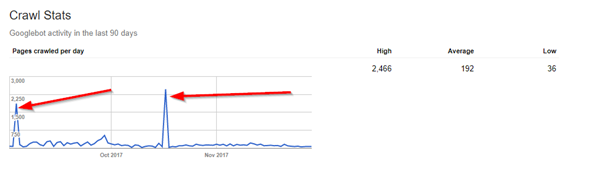

The Crawl Stats and Crawl Errors features are particularly helpful: you can use them to see how many pages Googlebot crawls per day on your website. Any spike or drop in the pages crawled per day needs further investigation.

Besides crawl rate, you can check index status, submit new pages for indexing, and analyze search traffic, including clicks, impressions, CTR, and average position.
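CTR (click-through rate) is simply clicks divided by impressions. If you export your search traffic data, a tiny script can recompute it per page. The page paths and numbers below are made up for illustration, and `click_through_rate` is a hypothetical helper.

```python
def click_through_rate(clicks: int, impressions: int) -> float:
    """Return clicks divided by impressions, or 0.0 when there are no impressions."""
    if impressions == 0:
        return 0.0
    return clicks / impressions


# Made-up rows: (page, clicks, impressions).
rows = [
    ("/blog/technical-seo", 120, 4000),
    ("/blog/site-speed", 45, 900),
]

for page_path, clicks, impressions in rows:
    ctr = click_through_rate(clicks, impressions)
    print(f"{page_path}: CTR {ctr:.1%}")
```

Pages with many impressions but a low CTR are often good candidates for a better title tag or meta description.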

There is indeed a wealth of information sitting there for you to explore. Don’t miss it.

Make the best use of it.
